On Siri, Privacy Protection And Our Expectations From Apple

In late July, news broke that some contractors working for Apple had access to Siri voice recordings from users around the world to help tweak Siri's performance. A month later, what has happened? Has Apple's handling of the issue lived up to its own high standards on privacy protection? Did Apple meet our expectations? Let's find out.

Privacy protection as a differentiation factor

I'm not surprised by recent news about Amazon having access to voice recordings. The same goes for Google making headlines over similar issues. But news that Apple uses contractors to help tweak its algorithms? I'm close to speechless.

In recent years, privacy has become a significant differentiator for Apple, for many reasons. One of them is the very existence of Facebook and Google. As we all know, they collect all the data they can about us while we use their services, and then sell access to this massive amount of data to advertisers. In general, advertising privacy protection is a tricky thing for any tech company to do these days, Apple included. "What happens on your iPhone stays on your iPhone," as Apple's own marketing puts it. Well, it seems it is much more complicated than that.

When your company is running marketing campaigns that constantly accentuate a privacy focus, it is incongruent to keep stuff like this in the shadows. Apple didn't try to hide that this is happening but it didn't do much to keep people informed either.
Benjamin Mayo, writing on his personal blog

And

With Apple putting so much marketing and company effort into differentiating itself on privacy, the company must not only do better than "the other guys," they must be seen to be doing better than the other guys. This incident is not a good look for the company.
Shawn King, for The Loop

In the age of cloud-connected devices and AI-powered services, we may have to accept losing some privacy along the way. After all, these services are so convenient in so many facets of our modern lives. Or maybe, just maybe, we should expect much more from tech companies?

A few steps back in time

It all started with this report from The Guardian:

Apple contractors regularly hear confidential medical information, drug deals, and recordings of couples having sex, as part of their job providing quality control, or “grading”, the company’s Siri voice assistant, the Guardian has learned.

This information came from a whistleblower:

A whistleblower working for the firm, who asked to remain anonymous due to fears over their job, expressed concerns about this lack of disclosure, particularly given the frequency with which accidental activations pick up extremely sensitive personal information.

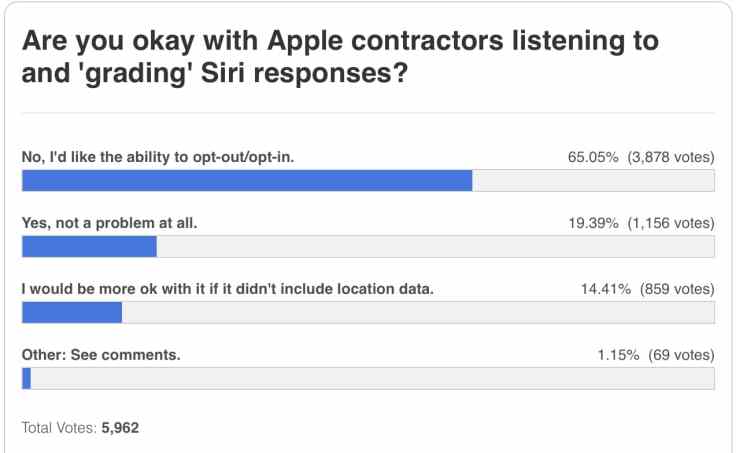

Following this report, a poll by 9to5Mac confirmed what people expect from Apple on privacy.

Well-known Apple pundit Jason Snell wrote:

My feelings about this issue are the same as they are about Amazon: I'm not comfortable with the possibility that recordings made of me in my home or when I'm walking around with my devices will be listened to by other human beings, period. I'd much prefer automated systems handle all of these sorts of "improvement" tasks, and if that's implausible, I'd like to be able to opt out of the process (or even better, make it opt-in).
Jason Snell

What did Steve Jobs say about privacy?

To answer this question, let's go back to Steve Jobs' well-known stance on privacy:

Privacy means people know what they're signing up for, in plain English, and repeatedly… Let them know precisely what you're going to do with their data.
Steve Jobs

In today's world, an updated version would be: make this process clear to users. Allow people to opt out if they wish. Make it possible for users to know whether their own recordings were listened to by real humans, even if less than 1% of recordings are targeted.

Grading Apple’s response

A week after the news came out:

Apple is suspending the program world wide. Apple says it will review the process that it uses, called grading, to determine whether Siri is hearing queries correctly, or being invoked by mistake.

Did Apple deliver on its promise? Judging by the statement it published a month later, I would say yes in a heartbeat:

« As a result of our review, we realize we haven’t been fully living up to our high ideals, and for that we apologize. As we previously announced, we halted the Siri grading program. We plan to resume later this fall when software updates are released to our users — but only after making the following changes:

- First, by default, we will no longer retain audio recordings of Siri interactions. We will continue to use computer-generated transcripts to help Siri improve.

- Second, users will be able to opt in to help Siri improve by learning from the audio samples of their requests. We hope that many people will choose to help Siri get better, knowing that Apple respects their data and has strong privacy controls in place. Those who choose to participate will be able to opt-out at any time.

- Third, when customers opt-in, only Apple employees will be allowed to listen to audio samples of the Siri interactions. Our team will work to delete any recording which is determined to be an inadvertent trigger of Siri. »

Positive reactions on Twitter

As you can see, Apple fell short of its usual high standards on privacy protection in recent months. In situations like this, the challenge lies more in how the problem is handled than in the problem itself. Apple took its time to respond, and it did so in a full and transparent manner. Is this enough to convince everybody? No. Is this better than what most tech companies do these days? Hell yes. Will you opt in to help Apple improve Siri?

Must read references

- The Guardian report, via MacRumors: Contractors Working on Siri 'Regularly' Hear Recordings of Drug Deals, Private Medical Info and More Claims Apple Employee – MacRumors

- 9to5Mac report: https://9to5mac.com/2019/07/26/siri-privacy-concerns-report/

- TechCrunch report: https://techcrunch.com/2019/07/26/siri-recordings-regularly-sent-to-apple-contractors-for-analysis-claims-whistleblower/

- 9to5Mac poll: https://9to5mac.com/2019/07/27/apple-siri-contractors-poll/

- TidBITS short comment: Apple Workers May Be Listening to Your Siri Conversations – TidBITS

- MacRumors on the first lawsuit (filed only two weeks after the news broke!): Apple Facing Lawsuit for 'Unlawful and Intentional' Recording of Confidential Siri Requests Without User Consent – MacRumors

- A small peek behind the scenes, as reported by the Irish Examiner: Apple contractors listened to 1,000 Siri recordings per shift, says former employee

- Not only Google, Amazon and Apple, but Microsoft too: https://www.iphoneincanada.ca/news/skype-recordings/

- The Financial Times on Apple's apology: https://www.ft.com/content/2563911e-c9a9-11e9-a1f4-3669401ba76f

- MacStories comment on Apple’s Siri grading program changes: https://www.macstories.net/news/apple-announces-changes-to-siri-grading-program/