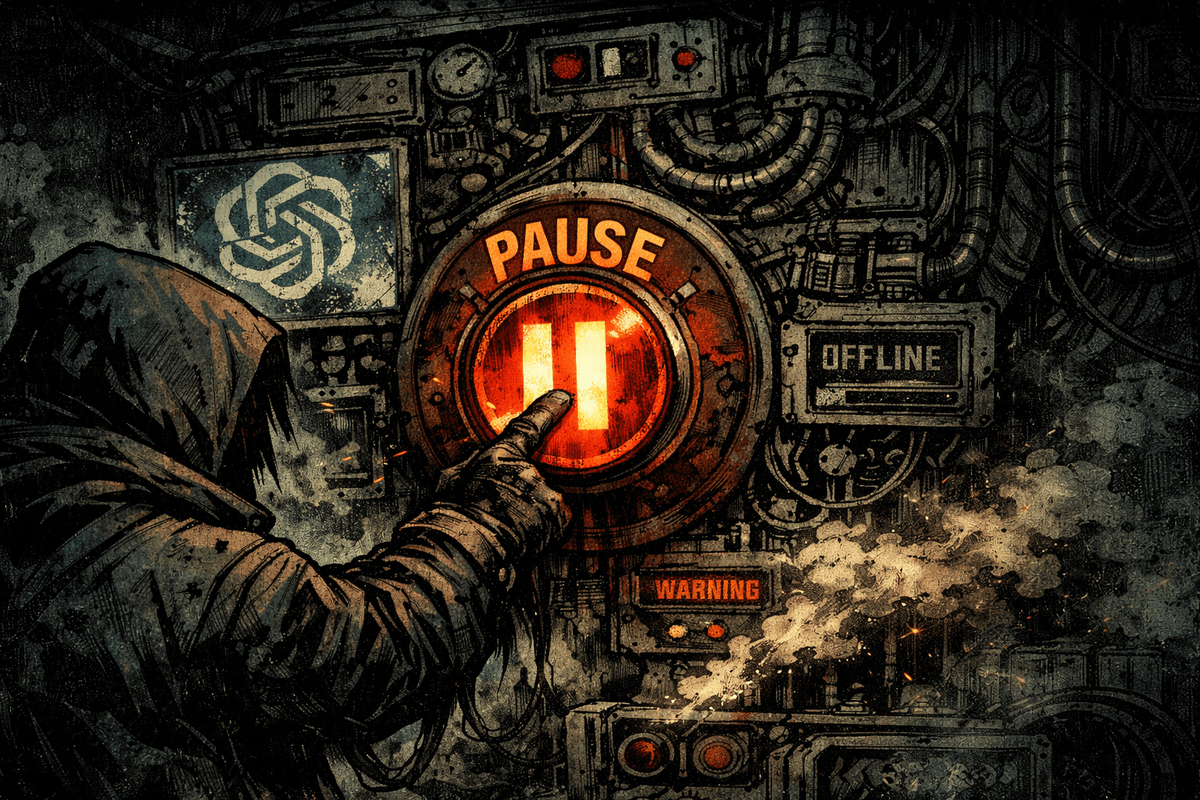

Hitting Pause on ChatGPT

Always have an exit strategy at hand; you never know.

After relying on ChatGPT as a support tool for most of my AI-related creative work, I found that recent developments called for a reassessment of my subscription. With Claude already my preferred tool for coding and viable alternatives available for the other features I used, I decided to pause my ChatGPT Plus subscription. The move, however, revealed deeper issues about data portability and vendor lock-in in the AI industry.

Context and Ethical Concerns

Sam Altman's recent comments about potential military applications of OpenAI's technology prompted me to re-evaluate my relationship with the platform. While AI has tremendous potential for positive applications, the possibility of its use in military contexts raised questions about the ethics of supporting such development through my subscription. In contrast, Anthropic, led by Dario Amodei, who advocates for more cautious and ethically grounded AI development, represents a different approach to building AI systems. By shifting my subscription to Anthropic, I wanted to reward a company taking a more principled stance on AI safety and responsible deployment. It's important to regularly reassess our tools and services against our values, and to support companies that align with them.

Finding Viable Alternatives

My shift from ChatGPT to Claude actually started well before the recent military controversy. Claude has become increasingly popular in the coding community, and when I started new personal coding projects, I naturally gravitated toward it because of its growing reputation as the better choice for developers. To be honest and transparent, by the time Sam Altman's comments emerged, I had already been using Claude extensively, which made the decision to pause ChatGPT feel less like a protest and more like a logical continuation of a preference I'd already established.

Image generation was one of the few ChatGPT features I actively used. When I looked into alternatives, I found that Midjourney, which I had used in the past, is not only still active but, in my view, remains the superior choice for creative image generation. For roughly a quarter of what I was paying for ChatGPT Plus, I can maintain full image creation capabilities. Other advanced features available through ChatGPT Plus, like Sora for video generation, were never part of my workflow, which made the transition easier. Meanwhile, I've been investing time in Claude Code, Anthropic's agentic coding tool, which aligns better with my development needs.

Data Portability and the Challenges of Migration

Switching AI providers is more complex than simply cancelling a subscription. I had to audit all my existing workflows to ensure nothing would break. This exercise revealed that a few of my automation systems had come to depend on ChatGPT, but migrating them to alternatives was straightforward once I had identified them.
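For anyone attempting a similar audit, here is a minimal sketch of one way to go about it: a small Python script that scans a folder of scripts and config files for strings that usually indicate an OpenAI dependency. It is not the exact process I followed. The function name `find_openai_references`, the list of file extensions, and the search patterns are all illustrative assumptions, so adjust them to your own setup.

```python
import re
from pathlib import Path

# Illustrative patterns that usually signal an OpenAI dependency.
# This list is an assumption, not an exhaustive inventory.
PATTERNS = [
    re.compile(r"api\.openai\.com", re.IGNORECASE),      # direct API calls
    re.compile(r"\bOPENAI_API_KEY\b"),                   # common env variable name
    re.compile(r"^\s*(?:import|from)\s+openai\b"),       # Python SDK import
]

# File types worth scanning (hypothetical selection; extend as needed).
TEXT_SUFFIXES = {".py", ".sh", ".js", ".ts", ".json", ".yaml", ".yml", ".toml"}


def find_openai_references(root):
    """Return (path, line_number, line) tuples for every matching line under root."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file():
            continue
        # ".env" is a dotfile with no suffix, so it is checked by name.
        if path.suffix not in TEXT_SUFFIXES and path.name != ".env":
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip unreadable or binary files
        for number, line in enumerate(text.splitlines(), start=1):
            if any(pattern.search(line) for pattern in PATTERNS):
                hits.append((str(path), number, line.strip()))
    return hits
```

Calling `find_openai_references(".")` from the folder that holds your automation scripts returns a list you can work through one entry at a time. A plain text search like this only catches explicit references. Anything wired up through a third-party integration or a no-code tool still needs a manual check.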

The real challenge lies in data portability. One of the most practical issues when leaving a service is extracting your data and conversation history. I had previously written about the need for a "takeout" option for ChatGPT (see “A Case for ChatGPT Takeout”), similar to Google's data export feature. Discovering that Anthropic provides a custom prompt for importing memory from another AI provider into Claude was both validating and helpful, though I wish ChatGPT had official support for this kind of migration.

Beyond individual conversation memories, there's a broader systemic issue with how AI providers handle past conversations. Most platforms lock conversations into their own ecosystems with no standardized way to export, archive, or migrate them to another provider. This is particularly problematic for users who may have years of conversations containing valuable insights, code snippets, or creative work. Unlike email or social media platforms, where data portability is increasingly expected, AI providers have been slow to adopt similar standards. The conversations you have with ChatGPT remain ChatGPT's conversations—accessible only through their interface and on their terms. When you leave, you leave that history behind.

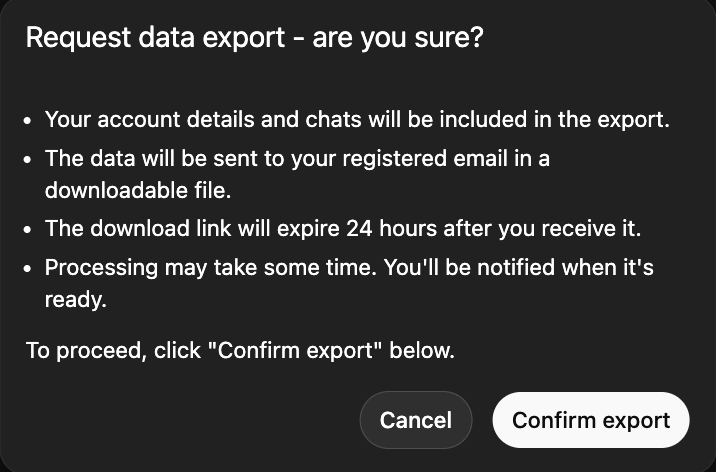

A recent article from Techcrunch points to a way to export past conversations, too, by visiting the ChatGPT Settings panel as shown here:

What's Next?

I've used Claude extensively for coding work, but the next few weeks will be interesting as I explore how it handles non-coding prompts and more personal inquiries. There's something intriguing about discovering whether different AI models have distinct "personalities" or approaches to problem-solving. Does Claude respond differently to creative requests compared to ChatGPT? Does it have different strengths when it comes to writing, brainstorming, or exploring abstract ideas? These are questions I'm genuinely curious to investigate. The differences between models might reveal something fundamental about how these systems are trained and what values—intentional or otherwise—are baked into them.

Ultimately, the decision to pause ChatGPT was about aligning my tools with my values. It's a reminder that even as we become dependent on services, maintaining flexibility and periodically evaluating alternatives keeps us in control of our digital lives. The shift also highlights an opportunity to be more deliberate about the AI tools I use and what I learn from comparing them.